Faster release with Maven CI Friendly Versions and a customised flatten plugin

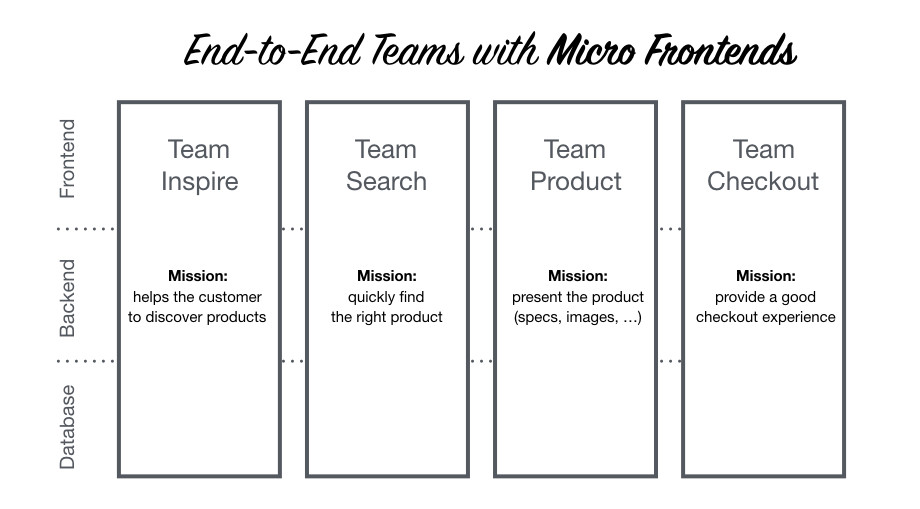

Fed up with waiting for the maven release? We’ve found a way to cut the release time by half. Each of our teams at Outbrain is responsible for its own service code in its own repository. However, our teams also share a large Maven-based repository that contains modules (libraries) that get released as Maven artifacts. After a module is released, it can be used by the teams. Thus, the shared repository — in contrast to service code, which is managed within individual team repositories — serves as a centralised place to manage team libraries.

Since our shared repository has hundreds of modules managed by multiple teams, we started to face failures during release, and release time increased dramatically. We decided to tackle this issue to boost efficiency.

The solution we came up with was to move to the Maven Ci Friendly Versions, which eliminate race conditions. (The Maven release plugin involves a git commit phase to change the pom.xml versions, but the change cannot get pushed if the commit hash set is out of date, prompting the build to fail.)

Moreover, we stopped using the Maven Release Plugin, accelerating our release process.

Bye Bye, Release Plugin.

Until recently, we had been using the Maven Release Plugin in order to release our libraries. The plugin has a two-step process, with different commands involved (release:prepare, release:perform).

The “prepare” and “perform” goals involve building the project multiple times. Moreover only one release can be triggered at a time — i.e., multiple releases cannot run simultaneously due to race conditions that were described earlier — you must wait for the current release to complete before starting the next. For projects that, like ours, have long build times, this is a deal-breaker. The Maven release plugin took far too long to run.

Welcome, Maven CI-Friendly versions

The approach we took is lightweight compared to the Maven release plugin approach and allows for multiple releases to be triggered and run simultaneously.

Here are the advantages of this approach over using the release plugin:

The Maven CI-Friendly Setup

The structure of our Monorepo (which follows a parent-child hierarchy) allowed us to easily transform all our pom.xml files from hard-coded versions to ${revision} properties as our artifact versions, which can be overridden as well.

In order to avoid redefining the revision property for each module, we defined the revision property in the parent pom.xml.

Here is a child pom.xml:

<project>

<parent>

<artifactId>ci-friendly-parent</artifactId

<groupId>com.outbrain.example</groupId>

<version>${revision}</version>

</parent>

<artifactId>ci-friendly-child</artifactId>

<name>CI Friendly Child</name>

</project>

And this is the parent pom.xml:

<project>

<groupId>com.outbrain.example</groupId>

<artifactId>ci-friendly-parent</artifactId>

<name>CI Friendly Parent</name>

<version>${revision}</version>

<properties>

<revision>1.0.0-SNAPSHOT</revision>

</properties>

</project>

As you can see, we moved to the CI Friendly Versions using revision property, and we are now set up to issue a local build to verify that the definition is correct.

To issue a local build, which will not be published, we invoked

mvn clean package as usual. This resulted in the artifact version 1.0.0-SNAPSHOT.

Want to change the artifact version? Easy.

Use the following command:

mvn clean package -Drevision=<REPLACE_ME>

The Maven release plugin used the revision placed in the pom.xml to define the next revision for release. If a development pom.xml holds a version value of 1.0-SNAPSHOT then the release version would be 1.0.

This value is then committed to the pom.xml file.

Finally, we can avoid those commits and hard-coded versions in pom.xml files.

Install/Deploy

In the Maven Ci Friendly Versions guidelines it is mentioned that the flatten maven plugin is necessary if you want to deploy or install your artifacts. Without this plugin the artifacts generated by this project cannot be used by other Maven projects.

This is true. But, the problem is that the flatten Maven plugin coupled with the “resolveCiFriendliesOnly” option does not work as expected due to bugs. Maven’s flatten plugin is a somewhat over-engineered, overly complex plugin that did not fit our needs. As adherers of the Unix philosophy, we decided to create our lightweight custom plugin, the ci friendly flatten maven plugin that replaces only the ${revision}, ${sha}, and ${changelist} properties.

The final pom.xml

</project>

<project>

<groupId>com.outbrain.example</groupId>

<artifactId>ci-friendly-parent</artifactId>

<name>CI Friendly Parent</name>

<version>${revision}</version>

<properties>

<revision>1.0.0-SNAPSHOT</revision>

</properties>

<modules>

...

</modules>

<build>

<plugins>

<plugin>

<groupId>com.outbrain.swinfra</groupId>

<artifactId>ci-friendly-flatten-maven-plugin</artifactId>

<version>FIND_HERE</version>

<executions>

<execution>

<goals>

<goal>clean</goal>

<goal>flatten</goal>

</goals>

</execution>

</executions>

</plugin>

</plugins>

</build>

</project>

Building the bridge to Maven Ci Friendly Versions

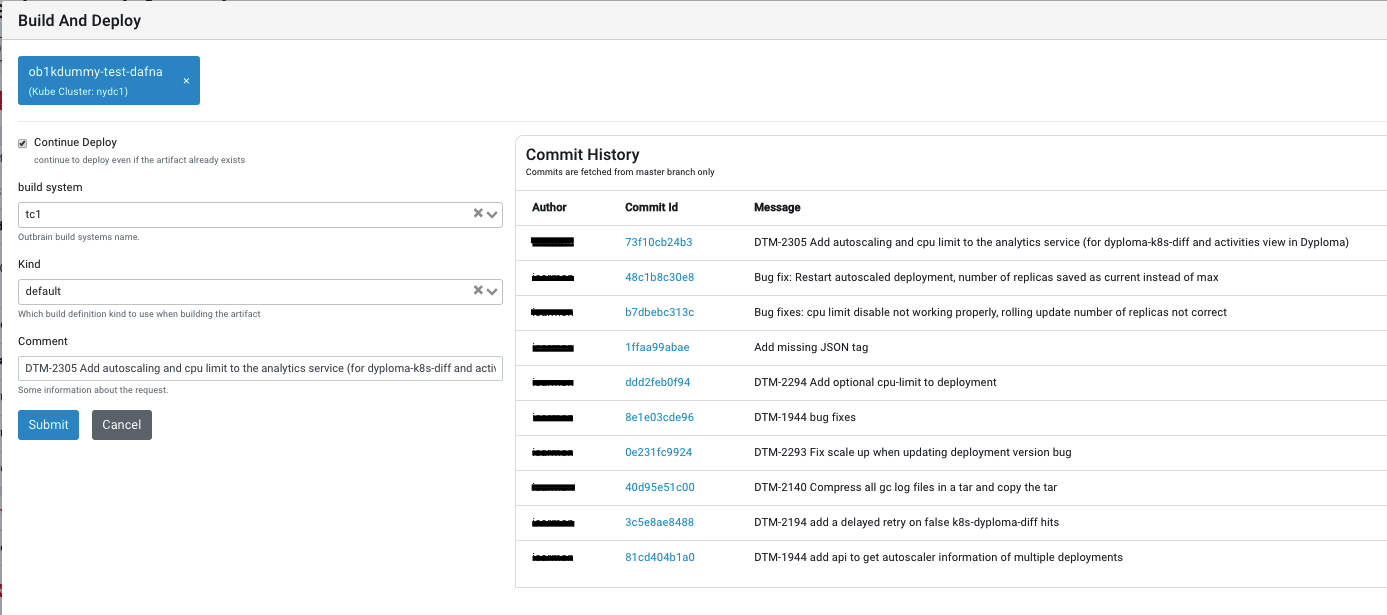

We decided to transition to Maven Ci Friendly Versions. As I mentioned before, due to the bugs in the flatten plugin, we first needed to develop our custom flatten plugin. In our case, we used TeamCity to release our libraries, fetching the release version from git and tagging it (but it is worth noting that this process is suited to other build systems as well).

So, what do the release steps look like?

- Fetch the latest git tag, increment it, and write the result to revision.txt file. This is the version we are going to release.

mvn ci-friendly-flatten:version

2. Set a system.version TeamCity parameter for our soon-to-be-released version, needed in order to use this version in the steps that follow.

#!/bin/bash -x

VER_PATH=”%teamcity.build.checkoutDir%/revision.txt”

REV=`cat $VER_PATH`

set +x

echo “##teamcity[setParameter name=’system.version’ value=’$REV’]”

3. Deploy the jars with the new version.

mvn clean deploy -Drevision=%system.version%

4. Tag the current commit with the updated version and push the tag.

mvn ci-friendly-flatten:scmTag -Drevision=%system.version%

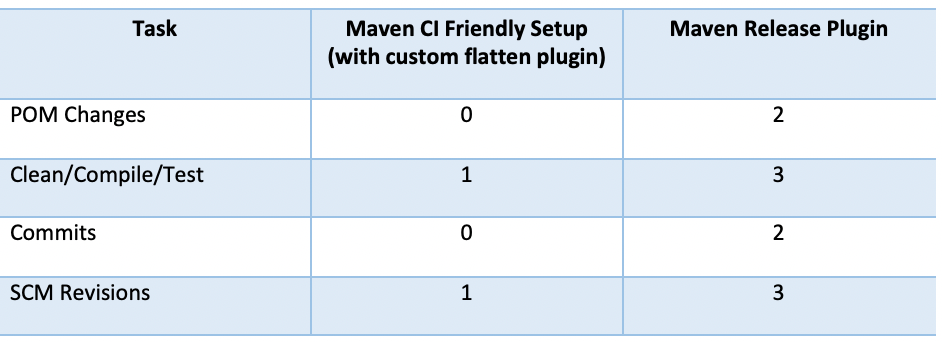

The Maven-Release Plugin has two commits and, thus, triggers Clean/Compile/Test multiple times. In contrast, the new release process relying on our custom flatten plugin has zero commits, effectively eliminating build failures caused by race conditions in committed code during the build.

Moreover, in this release process, the goals Clean/Compile/Test are executed only once.

Overall, our new approach slashed release time by 50%, from 6+ min to 3+ min.

As a last note, we’ve employed this approach in other projects as well. So, while the time savings on this project was 3 minutes, in another project that was originally 22 minutes, the custom flatten plugin cut 11 minutes off the build.

So, take it from us. There is no need to get bogged down by Maven flatten plugin. Save yourself the headache. All you need is to switch to the Maven Ci Friendly Versions with our custom Ci Friendly Flatten Maven Plugin.