The background

Over the last month, we at Outbrain have started testing ScyllaDB. I will explain in a minute what ScyllaDB is and how we came to test it, but the most important point is that ScyllaDB is a new database in its early stages, still ahead of its first GA release (coming soon). It is not an easy decision to be among the first to try such a young project that few have used before (so far there are about 2 other production installations), but as they say, someone has to be first… Both ScyllaDB and Outbrain are happy to share openly how the test goes: what the hurdles are, what works and what does not.

How it all began:

I have known the guys from Scylla for quite some time. We met during the first iteration of the company (Cloudius Systems) and again in the early stages of ScyllaDB. Dor and Avi, the founders of ScyllaDB, wanted to ask whether we, as heavy users of Cassandra, would be happy with the solution they were going to write. I said, “Yes, definitely,” and I remember saying, “If you give me Cassandra functionality and operability at the speed and throughput of Redis, you’ve got me.”

Time went by, and about 6 months ago they came back and said they were ready to start integrations with live production environments.

This is the time to tell you what ScyllaDB is.

The easiest description is “Cassandra on steroids”. That’s right, but to get there the guys at Scylla basically had to rewrite the entire Cassandra server from scratch, meaning:

- Keep the Cassandra interface exactly the same, so client applications do not have to change.

- Write it all in C++, which avoids the issues the JVM brings with it, mainly the GC pauses that were hurting the high latency percentiles.

- Write it all in an asynchronous programming model that enables the server to run at very high throughput.

- Shard-per-core approach: on top of the cluster sharding, Scylla shards data per core, which allows it to run lockless and scale up with the number of cores.

- Scylla uses its own cache and does not rely on the operating system cache. This saves data copies and avoids slowdowns due to page faults.
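To make the shard-per-core idea a bit more concrete, here is a minimal sketch of the routing step only. It is not Scylla's actual algorithm: the `shard_for` function, the CRC32 hash and the `ShardedStore` class are assumptions chosen for illustration. The point it shows is that every key maps deterministically to exactly one shard, so each shard's data would only ever be touched by the core that owns it and needs no locks.

```python
import zlib


def shard_for(key, shard_count):
    """Map a key to one shard with a stable hash (illustrative choice, not Scylla's)."""
    return zlib.crc32(key.encode("utf-8")) % shard_count


class ShardedStore:
    """Toy store with one private dict per shard (one shard per core in the real idea)."""

    def __init__(self, shard_count):
        self.shards = [dict() for _ in range(shard_count)]

    def put(self, key, value):
        # Only the owning shard ever sees this key.
        self.shards[shard_for(key, len(self.shards))][key] = value

    def get(self, key):
        return self.shards[shard_for(key, len(self.shards))].get(key)


store = ShardedStore(shard_count=8)
store.put("doc-1", {"title": "hello"})
print(store.get("doc-1"), "lives on shard", shard_for("doc-1", 8))
```

In a real server each shard would be driven by its own thread pinned to a core; this sketch runs on a single thread and only demonstrates the partitioning.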

I must say this intrigued me. If you look at the open source NoSQL data systems that have caught on, there is one camp written in C/C++, with high performance but relatively simple functionality (memcached or Redis), and another camp with rich functionality but based on the JVM (Spark, Hadoop, Cassandra). If you can combine the best of both worlds, it sounds great.

Where does that meet Outbrain?

Outbrain is a heavy user of Cassandra. We have a few hundred Cassandra machines running in 20 clusters over 3 datacenters. They store 1-2 terabytes of data each. Some of the clusters are hit at user query time, so unexpected latency is an issue. As data, traffic and complexity grew with Outbrain, it became more and more complex to maintain the Cassandra clusters and keep their performance reasonable. It always required more and more hardware to support both the growth and the performance.

The promise of stable latency and 5-10x more throughput (far fewer machines) without the cost of rewriting our code made a lot of sense, and we decided to give it a shot.

One thing we badly needed that was not yet in the product was cross-DC clusters. The Cassandra feature of eventual consistency across clusters in different data centers is key to how Outbrain operates, so it was very important for us. It took the guys from ScyllaDB a couple of months to finish that feature and to test and verify that everything works, and then we were ready to go.

The ScyllaDB team is located in Herzliya, which is very close to our office in Netanya, and they were very happy to come over and start the test.

The team working on this test is:

Doron Friedland – Backend engineer at Outbrain’s App Services team.

Evgeny Rachlenko – from Outbrain’s Data Operations team.

Tzach Liyatan – ScyllaDB Product manager.

Shlomi Livne – ScyllaDB VP of R&D.

The first step was to choose the right cluster and functionality to run the test on. After a short consideration we chose to run this comparison test on the cluster that holds our entire document store. It holds the information about all active documents in Outbrain’s system. We are talking about a few million documents, each of which has hundreds of different features represented as Cassandra columns. This store is updated all the time and is accessed on every user request (a few million requests every minute). Cassandra started struggling with this load, and we applied many solutions and optimizations in order to sustain it. We also enlarged the cluster to keep up.

One more thing we did to overcome the Cassandra performance issues was to add an application-level cache, which consumes a few more machines by itself.

One can say that this is exactly why you choose a scalable solution like Cassandra: so you can grow it as you wish. But when the number of servers starts to rise and carries a significant cost, you want to look at other solutions. This is where ScyllaDB came into play.

The next step was to install a cluster, similar in size to the production cluster.

Evgeny describes below the process of installing the cluster:

Well, the installation impressed me in two respects.

The configuration was pretty much the same as Cassandra’s, with a few changes in parameters.

Scylla simply ignores the GC and HEAP_SIZE parameters and uses its configuration as an extension of the cassandra.yaml file.

Our Cassandra clusters run with many components integrated into the Outbrain ecosystem. Shlomi and Tzach properly defined the most important graphs and alerts. Services such as Consul, collectd and Prometheus with Grafana were also integrated as part of the POC. Most integration tests passed without my intervention, apart from light changes in the Scylla Chef cookbook.

Tzach describes what it looked like from their side:

The Scylla installation, done by Evgeny, used a clone of the Cassandra Chef recipes with a few minor changes. Nodetool and cqlsh were used for sanity tests of the new cluster.

As part of this process, Scylla metrics were directed to Outbrain’s existing Prometheus/Grafana monitoring system. Once traffic was directed to the system, the application and ScyllaDB metrics were all in one dashboard, for easy comparison.

Doron describes the application-level steps of the test:

- Create a dual DAO to work with ScyllaDB in parallel to our main Cassandra storage (see elaboration on the dual DAO implementation below).

- Start dual writes to both clusters (in production).

- Start dual reads (in production) to read from ScyllaDB in addition to the Cassandra store (see the test-dual DAO elaboration below).

- Not done yet: migrate all the data from Cassandra to ScyllaDB by streaming it into the ScyllaDB cluster (similar to a migration between Cassandra clusters).

- Not done yet: measure the test reads from ScyllaDB and compare both the latency and the data itself to what is read from Cassandra.

- Not done yet: if the latency from ScyllaDB is better, try to reduce the number of nodes to test the throughput.
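The dual-write and dual-read steps above can be sketched roughly as follows. This is not our actual DAO: `InMemoryStore`, `DualDao`, `drain` and the two counters are hypothetical names, and the in-memory store stands in for real Cassandra and ScyllaDB sessions. The sketch assumes that reads are always served from the primary store, while the test read against the second store runs off the request path and only records mismatches and errors.

```python
import threading
from concurrent.futures import ThreadPoolExecutor, wait


class InMemoryStore:
    """Hypothetical stand-in for a Cassandra or ScyllaDB session."""

    def __init__(self):
        self._rows = {}

    def write(self, key, value):
        self._rows[key] = value

    def read(self, key):
        return self._rows.get(key)


class DualDao:
    """Writes to both stores; serves reads from the primary and test-reads the shadow."""

    def __init__(self, primary, shadow, workers=4):
        self.primary = primary
        self.shadow = shadow
        self.mismatches = 0
        self.shadow_errors = 0
        self._lock = threading.Lock()
        self._pool = ThreadPoolExecutor(max_workers=workers)
        self._pending = []

    def write(self, key, value):
        self.primary.write(key, value)
        try:
            self.shadow.write(key, value)
        except Exception:
            # A failure in the store under test must never fail the request.
            with self._lock:
                self.shadow_errors += 1

    def read(self, key):
        expected = self.primary.read(key)
        # The test read runs in the background so it adds no latency to the caller.
        self._pending.append(self._pool.submit(self._test_read, key, expected))
        return expected

    def _test_read(self, key, expected):
        try:
            actual = self.shadow.read(key)
        except Exception:
            with self._lock:
                self.shadow_errors += 1
            return
        if actual != expected:
            with self._lock:
                self.mismatches += 1

    def drain(self):
        """Block until all background test reads issued so far have finished."""
        pending, self._pending = self._pending, []
        wait(pending)


dao = DualDao(primary=InMemoryStore(), shadow=InMemoryStore())
dao.write("doc-1", {"title": "hello"})
print(dao.read("doc-1"))
dao.drain()
print("mismatches:", dao.mismatches, "shadow errors:", dao.shadow_errors)
```

Keeping the shadow store off the critical path is the main design choice here: the service keeps behaving exactly as before, and the counters (plus latency timers, omitted for brevity) give the comparison data.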

Performance metrics:

Here are some very initial measurement results:

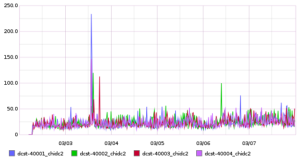

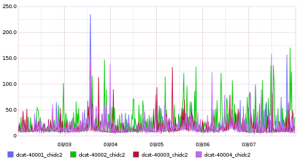

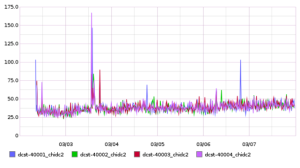

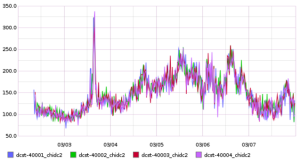

You can clearly see in the graphs that ScyllaDB performs much better and much more stably.

One disclaimer for now: Scylla does not yet have all the historical data and is serving only the data of the last week.

It’s not visible from the graph, but ScyllaDB is not loaded and thus spends most of its time idling. The more loaded it becomes, the more latency will go down (up to a limit, of course).

We need to wait and see the measurements of the following weeks. Follow our next posts.

Read latency (99th percentile) of a single entry, first week, data not migrated (2.3-7.3):

Scylla:

Cassandra:

Read latency (99th percentile) of multiple entries (* see comment below), first week, data not migrated (2.3-7.3):

Scylla

Cassandra

* Reading multiple partition keys is done by firing single-partition-key requests in parallel and waiting for the slowest one. We have learned that this use case amplifies every latency issue we have in the high percentiles.
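A small sketch of why this fan-out pattern is so sensitive to tail latency. The function names and the thread pool are illustrative assumptions, not our production code. `multi_get` fires one single-key read per key and returns only when the slowest one is done. `chance_of_slow_read` is simple arithmetic under the assumption that the reads are independent: the probability that at least one of N parallel reads is slower than the single-read 99th percentile is 1 - 0.99^N.

```python
from concurrent.futures import ThreadPoolExecutor


def multi_get(read_one, keys, pool):
    """Fire one single-key read per key in parallel and wait for all of them."""
    futures = [pool.submit(read_one, key) for key in keys]
    # result() blocks, so the total time is set by the slowest single read.
    return {key: future.result() for key, future in zip(keys, futures)}


def chance_of_slow_read(fanout, percentile=0.99):
    """Probability that at least one of `fanout` independent reads exceeds the percentile."""
    return 1.0 - percentile ** fanout


if __name__ == "__main__":
    rows = {"doc-1": "a", "doc-2": "b", "doc-3": "c"}
    with ThreadPoolExecutor(max_workers=8) as pool:
        print(multi_get(rows.get, ["doc-1", "doc-3"], pool))
    for fanout in (1, 10, 100):
        print(fanout, "parallel reads:", round(chance_of_slow_read(fanout), 3))
```

Under that independence assumption, a request that fans out to 10 keys hits a "1 in 100" slow read roughly 10% of the time, and one that fans out to 100 keys does so roughly 63% of the time, which is why the high percentiles of single reads matter so much for this use case.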

That’s where we are now. The test is moving on and we will update with new findings as we progress.

In the next post, Doron will describe the data model and our dual DAO, which is how we run such tests. Shlomi and Tzach will describe the data transfer and the upgrade events we had along the way.

Stay tuned.

Next update is here.

The graphs do not specify the Y-axis unit. Microseconds?

It is a very interesting article. Stable read performance looks good. As far as I understand, the given read benchmark shows that Cassandra’s “worst” cases are much slower than ScyllaDB’s. It would be nice to know: what was the “average” case?

Another question that would help to compare these two technologies: what replication factors were used by both clusters? What were the consistency requirements?

One more question, if possible: which design decisions in ScyllaDB gave these significant performance gains?

This is very interesting.

A minor note: on the latency charts you are using different scales when comparing the 99th percentiles. ScyllaDB is on 0 to 175, Cassandra is on 50 to 350.

This makes it less obvious (to your average skim reader of blog posts) that ScyllaDB latency is mostly below 50ms while Cassandra is consistently above 100ms.

For your consideration 🙂

Thanks for sharing the info.

Was wondering how this test finally went and whether you would be open to publishing your findings. Cheers,
